The Paper

Lin, Y. K., & Maruping, L. M. (2025). Organizing for AI Innovation: Insights From an Empirical Exploration of US Patents. MIS Quarterly, 49(3), 1095-1122.


Authors (Interviewees)

Yu-Kai Lin, Georgia State University

Likoebe M. Maruping, Georgia State University

Contributors (Interviewers)

Mathilda Oladimeji: PhD Student, Louisiana State University

Email: ooladi2@lsu.edu

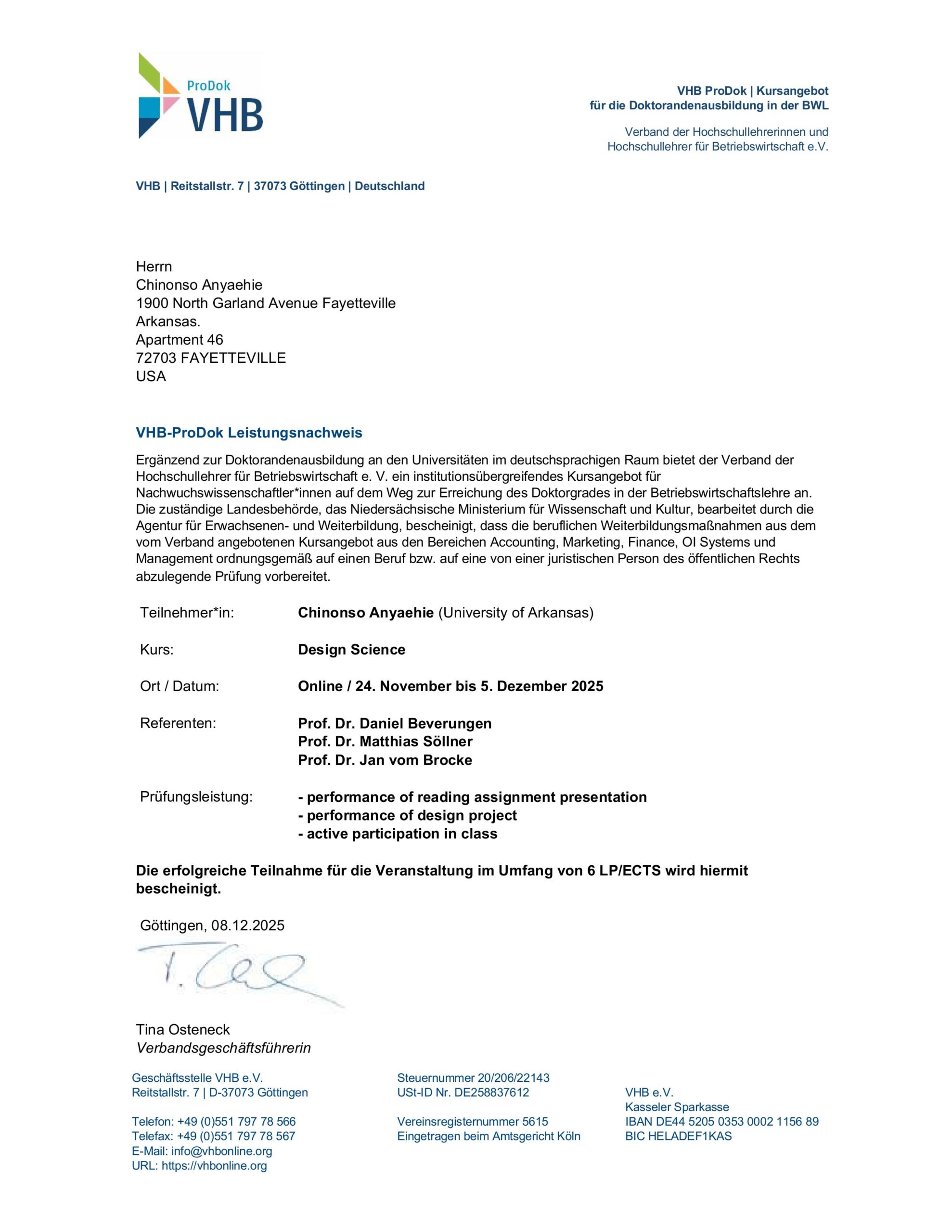

Chinonso Anyaehie: PhD Student, University of Arkansas

Email: canyaehie@walton.uark.edu

Interview Date: November 5th, 2025

Interview Opening Script (with Co-Coordinator Introduction)

Chinonso:

Thank you so much for taking the time to speak with us today. Your article is a timely and important contribution to how we understand the management of AI within organizations. I found it especially insightful how you used patent data to uncover the differences between AI and traditional IT innovations. My name is Chinonso Anyaehie, and I’m a PhD student in Information Systems at the University of Arkansas. I’m joined by my colleague Mathilda Oladimeji, a PhD student in Information Systems at Louisiana State University. Together, we’re coordinating this conversation as part of an initiative to help doctoral students and IS scholars better understand how leading researchers develop and structure impactful studies, from the initial idea to theoretical framing and empirical execution. We’re truly honored to have you with us today, and we’re looking forward to hearing the story behind your work.

(Chinonso): What first sparked your interest in studying how organizations innovate around AI? Was there a particular event, observation, or intellectual curiosity that led you to this question?

(Yu-Kai Lin): The initial motivation for us, at least for me, was that we often heard people say, “AI is different from IT.” We already had several strong conceptual papers on the theoretical differences and on what makes AI unique. At the same time, Likoebe and I were both very interested in innovation research and data. There is a robust body of research on innovation that has developed over decades. For me, I was trying to figure out a way to bridge that innovation literature with this emerging, novel category of innovation. That was one of the main motivations for me.

(Likoebe M. Maruping): I completely agree. In many ways, the motivation came from a mix of things: an event, a set of observations, and intellectual curiosity. The first factor was the emergence and rapid proliferation of artificial intelligence as a technology. Of course, this did not happen in a vacuum; it unfolded in an environment where information technologies were already well established. That led us to ask whether there was something about AI that truly required a new way of looking at it, especially in a comparative sense.

The second factor came from everyday observation. While there was a lot of hype around AI and how different it was, there were also many reports of organizations struggling to realize returns on their AI investments. That made us wonder if we needed to go back to first principles, rather than simply accepting untested assumptions about AI’s difference and its implications. Finally, our field has a long history of theory and research on innovation and on organizing for IT innovation. So the question became: can we simply apply what we know about IT to AI? And if not, what would it mean to organize differently for AI? Thinking that through might also help us understand why so many organizations struggle to innovate with AI. In summary, that was the essence of our motivation for this work.

(Mathilda): What made you select this specific context? What was the reason you chose patent data in particular?

(Yu-Kai Lin): There are several reasons supporting this selection. Patent data is a data source widely used by both innovation and information systems (IS) researchers to study innovation. Our approach aligns with this stream of research, as patents represent a well-established source of intellectual property for understanding innovation outcomes. Therefore, our use of patent data is consistent with much of the existing literature in innovation research. A second reason is that AI has existed since the 1950s and has evolved significantly over the decades, particularly in business applications. Patents offer a stronger way to capture these longitudinal innovation outcomes. During our review process, some reviewers suggested using open-source data; however, open-source repositories typically cover only the last two decades, while AI’s development spans much longer. Hence, patents were an intuitive choice to capture this extended timeline and to differentiate between AI and IT innovations.

(Likoebe M. Maruping): Furthermore, examining the phenomenon at a granular level requires detailed data, and patents provide precisely that level of specificity in the innovation claims they document.

(Chinonso): Working with patent data can be powerful but also very complex. What were the biggest intellectual and technical challenges you faced in collecting, classifying, and interpreting the data?

(Yu-Kai Lin): There are two main aspects to this question. The first concerns how we approached the question itself, which was how to differentiate AI from IT. If you read the paper, you will notice that its format is quite different from conventional studies that have hypotheses, followed by analysis and implications. Our study did not have a well-established theoretical framework to guide specific hypotheses, so we started from empirical discovery. We began with the data, identified regularities through observation, and then developed propositions at the end. It was my first time applying this approach, and it was a very interesting experience.

The second aspect concerns the patent data itself. The U.S. Patent and Trademark Office now makes it easy for the public to access patent data. Five or six years ago, when I worked with patent data, it took much more effort to collect and clean it. Today, you can conveniently download it directly from their database. The real challenge, however, was computational. To quantify radicalness, we examined how a patent’s combination of citations differed from that of other patents, essentially how knowledge elements were recombined. Innovation can be thought of as the recombination of knowledge, and citations represent that combination. Calculating these differences required extensive computation. In our replication package, I even noted in the README file that computing these quantities took several months of runtime. That was the most challenging part, managing the heavy computational load. The good thing is that patent data are freely available for anyone to use.

(Likoebe M. Maruping): I think Yu-Kai captured it perfectly. I would just add that beyond the temporal advantage of covering decades of innovation, patent data also allow us to observe phenomena at a very granular level, down to the specific patent claims. That level of detail was crucial for distinguishing between AI and IT innovations.

(Mathilda): What findings surprised you the most as the analysis unfolded? Was there anything in the data that challenged your initial assumptions?

(Likoebe M. Maruping): It was less about surprises and more about validation that strengthened our confidence in the research. Initially, we aimed to distinguish between AI-related and IT-related patents, and much of this work was done manually by identifying a set of AI keywords. Shortly after, we found a paper that had already conducted a machine learning–based classification of these patents. From a replicability standpoint, this provided a strong sense of reassurance, as our manual classification aligned with the results of prior research, demonstrating the robustness of our findings.

(Chinonso): How did you decide the paper was “good enough” to submit to MISQ? How long did that process take? What were the most challenging reviewer requests? Did they affect the final version? And what advice would you give to Ph.D. students aiming for MISQ?

(Yu-Kai Lin): Likoebe, being a senior editor at MISQ, can speak to this better, but I will start. Because the paper’s format was so unusual, we initially thought MISQ might not be the best fit. We first submitted it to two or three other journals. It was a difficult process because those outlets found our approach quite strange. MISQ was our fourth attempt. During the review process, many reviewers assumed this was a causal inference paper and asked us to discuss issues like endogeneity and mechanisms. But that was not the story we wanted to tell. We had to explain that our study was not about causal inference; it was about discovery, identifying empirical regularities, and using those to inform conceptual development, much like one would in case study research. That was challenging, but we were fortunate to have a supportive senior editor, Peter Gray, who understood the value of empirical discovery and how it can lead to future research that tests or refines propositions.

(Likoebe M. Maruping): Yes, exactly. When you asked that, we both smiled because the truth is, we did not know when it was “good enough for MISQ.” We had already tried three other journals, none of which thought it was ready. The other point is that all those journals were outside the information systems field, so audience fit may have been part of the issue. MISQ was the first venue where reviewers understood the IS phenomenon we were addressing. The biggest challenge was not outright resistance; it was the differing views on what the paper should be. We had one vision, and the reviewers had another. Much of the process was about reconciling those differences, and in the end, there was no complete reconciliation. We agreed to disagree.

At several points, we faced a choice. We could either completely follow the reviewers’ suggestions, which would have produced a fundamentally different paper, or stay true to our original vision. We chose the latter. Ultimately, the outcome came down to the editor’s judgment. Having a good senior editor who could manage diverging perspectives between us and the reviewers was crucial. The review team provided excellent feedback that improved the analyses, but the story direction remained our own. For Ph.D. students, my advice would be: do not automatically yield to every reviewer request if it changes your story. Stay true to what you are trying to accomplish, but do incorporate technical feedback that strengthens your paper. And if you find an editor who understands your vision, that is invaluable.

(Mathilda): How did you and your co-author complement each other’s thinking in developing this study? Were there moments when you saw the phenomenon differently?

(Yu-Kai Lin): This was a valuable learning experience. I am much more well-versed in empirical analysis, while Maruping contributed significantly to problem conceptualization and developing the right propositions, so there were strong complementarities between us.

(Likoebe M. Maruping): I completely agree that it was a great experience, especially in reconciling the link between conceptualization and measurable constructs. One of the most helpful aspects was the constant conversations we had to bridge what could be done with the data. For example, during the review process, we were asked what differentiates AI from IT. We spent time discussing which components within the patents classify an AI patent compared to an IT patent, and that’s where the magic of collaboration happens: it’s not just the additive sum of our individual strengths, but a truly synergistic effort.

(Chinonso): Behind every published paper is a story of discovery, uncertainty, and iteration. What part of your research journey do you think readers would find most interesting, but did not make it into the final paper?

(Yu-Kai Lin): MISQ has strict length requirements, so we had to leave out several interesting parts. As Likoebe mentioned earlier, to ensure robustness, we tested many alternative approaches for constructing our sample, operationalizing our outcome variables, and identifying AI patents. We found consistent results across approaches but could not include everything due to space limitations. One example is that we initially used textual analysis to identify radicalness. Earlier, I mentioned that we used citations to measure recombination, but it is also possible to use textual information from each patent document to identify knowledge components. I spent a lot of time on that analysis, but it did not make it into the final paper because of space constraints.

(Likoebe M. Maruping): Yes, that was a really fascinating one. That is the analysis where we used structural topic modeling. I really wish it had been included, not only because of the effort involved, but because it was such a compelling way to demonstrate radicalness.

(Mathilda): What are the most important implications for practice that this study could have?

(Likoebe M. Maruping): The first contribution lies in our propositions, which highlight that people, especially managers, cannot simply apply the same knowledge they have about managing IT projects and IT innovations to AI. This is even more relevant now, as there is no single model for innovating with AI; organizations are often figuring it out as they go. We hope our study provides some guidance for them in this process. The second contribution is that it challenges and validates common assumptions about AI. It’s human nature that when a discourse becomes widespread, its assumptions are often taken for granted. AI is frequently discussed as something radically different, but until now, there has been limited empirical work establishing whether that is truly the case.

(Yu-Kai Lin): We are not claiming that AI is incremental, but rather that it is more incremental than IT in a comparative sense. Our study’s comparative approach helps managers refine their process management practices to be more compatible with AI-driven innovation.

(Chinonso): If you were to advise a Ph.D. student who loved this paper and wanted to do a follow-up study, what would you suggest?

(Yu-Kai Lin): In our paper, we discussed several directions for future research. One avenue would be to empirically test the three sets of propositions we developed at the end of the paper. Another would be to explore other types of innovation outputs besides patents. Reviewers pointed out that patents might differ from other forms of innovation, so we encourage researchers to examine whether the relative differences between AI and IT innovations persist across other outputs.

(Mathilda): Many Ph.D. students are fascinated by AI but find it challenging to frame a publishable study. What advice would you give to early-career researchers who want to investigate AI-related topics?

(Likoebe M. Maruping): This is one of the main discussions even amongst our Ph.D. students, especially since being an AI researcher today means working in a crowded space where it can be difficult to stand out. While there’s no single right view, I believe it’s more advantageous to establish your identity as a researcher around the phenomenon rather than a particular technology. Technologies are constantly evolving artifacts; what’s in vogue today may fade tomorrow, so there’s an inherent risk in tying your scholarly trajectory too closely to one technology. Therefore, my advice is to keep the focus on the underlying phenomena of interest, because as technologies evolve, there will always be new and important problems to study.

(Yu-Kai Lin): I think it’s natural for Ph.D. students to be drawn to AI-related topics, but it’s equally important to continually ask: what’s truly new about AI, and what unique impact does it have?

Author Profiles

Contributor Profiles

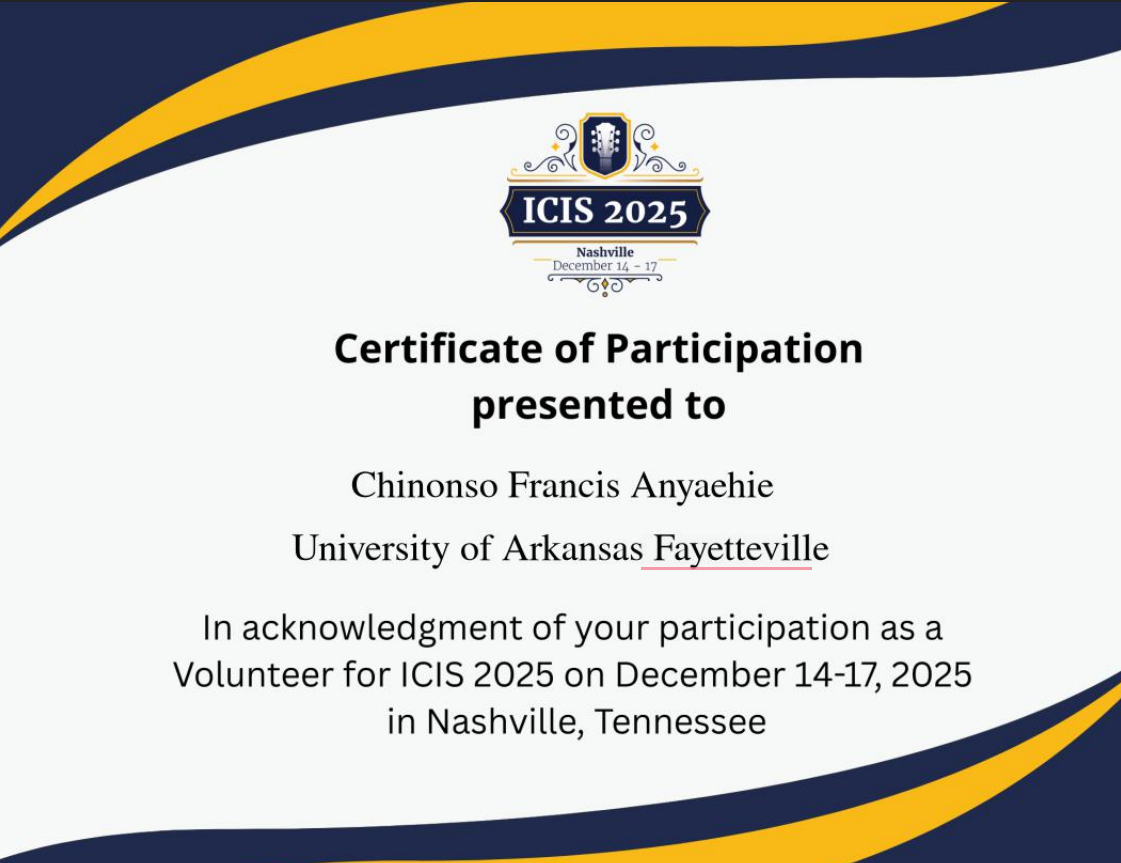

Chinonso Anyaehie is a visionary Information Systems Ph.D. scholar at the Sam M. Walton College of Business, University of Arkansas, exploring how emerging technologies, especially Artificial Intelligence, reshape innovation, work, and human experience. His research blends computational, design, and behavioral perspectives to uncover how AI adoption unfolds in real organizational settings. A Contributor to MISQ Insider, Chinonso translates top-tier Information Systems research into accessible insights in partnership with MIS Quarterly. He is also a Sequence Analysis Association presenter and an ICIS 2025 presenter and volunteer, where he will showcase his paper “Science and Commerce in Quantum Computing: Toward an Oscillatory Theory of Ecosystem Emergence” and lead the Table Talk “Building Visibility for Emerging IS Scholars.” Beyond academia, Chinonso designs AI-augmented learning systems and leads innovation platforms including ISResearcher.com, BusinessLeaders.ai, and ISScholar.com, each amplifying research visibility and advancing human-centered AI adoption worldwide.

Mathilda Oladimeji is a doctoral student in Information Systems at Louisiana State University (LSU). She earned a Master of Business Administration from LSU in 2024 and has been recognized with several competitive awards, including the Oskar Morgenstern Fellowship for scholars trained in quantitative methods and the Shell Graduate Summer Research Fellowship. Her research focuses on human behavior and technology use. She has served as a reviewer for the International Journal of Information Management and as a MISQ Insider contributor, and has published in the 2025 AMCIS and ICIS proceedings.
